Scammers are taking advantage of AI to carry out their schemes. With voice cloning tools that produce shockingly realistic speech, they trick people into believing they are speaking with someone they know, often to ask for money, personal information, or urgent help. Because these cloned voices are so convincing, many victims fall for the scam.

In this article, we will share real stories of victims deceived by voice cloning, explain how to detect this type of scam, and provide tips on how to protect yourself.

Scammers Target Thailand’s Prime Minister

Thailand’s Prime Minister, Paetongtarn Shinawatra, warned the public about AI-powered scams at a budget policy meeting on January 15. She revealed that a scammer had used AI to mimic the voice of a leader from a neighboring country. The fraudster sent her a voice message through a chat app, claiming to be that leader and expressing interest in international cooperation. Later, the scammer asked for a donation, falsely claiming that Thailand was the only ASEAN nation yet to contribute.

“This voice message made me realize that I was being deceived. The bank account number provided did not belong to the neighboring country either,” Ms. Paetongtarn said.

Realizing it was a scam, Ms. Paetongtarn ordered an investigation by Digital Economy and Society Minister Prasert Chantararuangthong, who is also Deputy Prime Minister.

She suspects the scammers obtained her contact details from someone close to her who had already fallen victim to the same scammer. Urging the public to stay alert, she warned against trusting voice messages or calls, even if they sound real [1].

Attorney Nearly Fooled by AI Voice Scam

Gary Schildhorn, an experienced fraud attorney, almost fell victim to a voice cloning scam. He received a call from someone who sounded exactly like his son, Brett, saying he had been injured in a car accident and arrested. Without hesitation, Schildhorn headed to the bank to withdraw $9,000 to help. On the way, he called his son’s wife.

As he was updating Brett’s wife, his real son called via FaceTime, revealing the scam. Shocked, Schildhorn reported the incident to law enforcement, but the scammers could not be traced because they used burner phones and demanded payment in cryptocurrency [2].

How Voice Cloning Works

Voice cloning technology uses artificial intelligence (AI) to replicate human voices with remarkable accuracy. AI models are trained on recordings of a person’s speech and can then generate new, synthetic speech that closely mimics that person. Some tools need only a short sample, such as audio taken from a social media video. Scammers exploit this technology to impersonate trusted figures and deceive victims into handing over money or sensitive information.

Common Voice Cloning Scams

Here are some of the most common ways scammers use cloned voices to extract money or sensitive information [3]:

- Fake emergency calls: Fraudsters mimic the voice of a family member, often pretending to be in distress due to an accident or legal trouble, pressuring loved ones to send money immediately.

- Executive impersonation: Cybercriminals impersonate company executives, like CEOs, to instruct employees to transfer funds urgently, exploiting their authority to bypass standard verification procedures.

- Phony customer support calls: Scammers pose as bank representatives or tech support agents using cloned voices to convince victims that their accounts are at risk, tricking them into providing personal details.

How to Spot a Voice Cloning Scam

Here are some red flags that may signal an AI voice cloning scam [4][5]:

- Requests for sensitive information: Legitimate banks and organizations generally do not ask for passwords or full account details during an unsolicited phone call.

- Unverified or hidden caller ID: Scammers often hide their phone numbers.

- Spoofed caller ID: Fraudsters manipulate caller ID to make it appear as if the call is coming from a trusted source, such as a bank or a family member.

- Generic or vague language: Scammers avoid using specific details about your account or personal life, instead relying on broad statements to create urgency.

- Emotional manipulation: Calls often involve distressing stories, such as a loved one in trouble, to trigger fear and force quick action.

How to Protect Yourself from Voice Cloning Scams

Scammers are becoming more sophisticated, but there are steps you can take to safeguard yourself and reduce your chances of falling victim [4][5]:

- Limit your digital footprint: Avoid sharing personal videos or voice recordings online, as these can be used for AI voice cloning.

- Keep an eye out: Be cautious of unexpected calls that pressure you into making quick decisions. If in doubt, hang up and call the person back on a number you already know is theirs.

- Strengthen verification methods: Enable multi-factor authentication (MFA/2FA) on important accounts, and encourage your family members to do the same.

- Create a family safe word: Choose a unique phrase that is difficult to guess, and require it to verify the identity of a loved one in distress.

Eydle Protects Your Company’s Online Presence

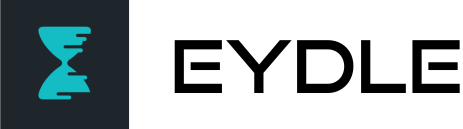

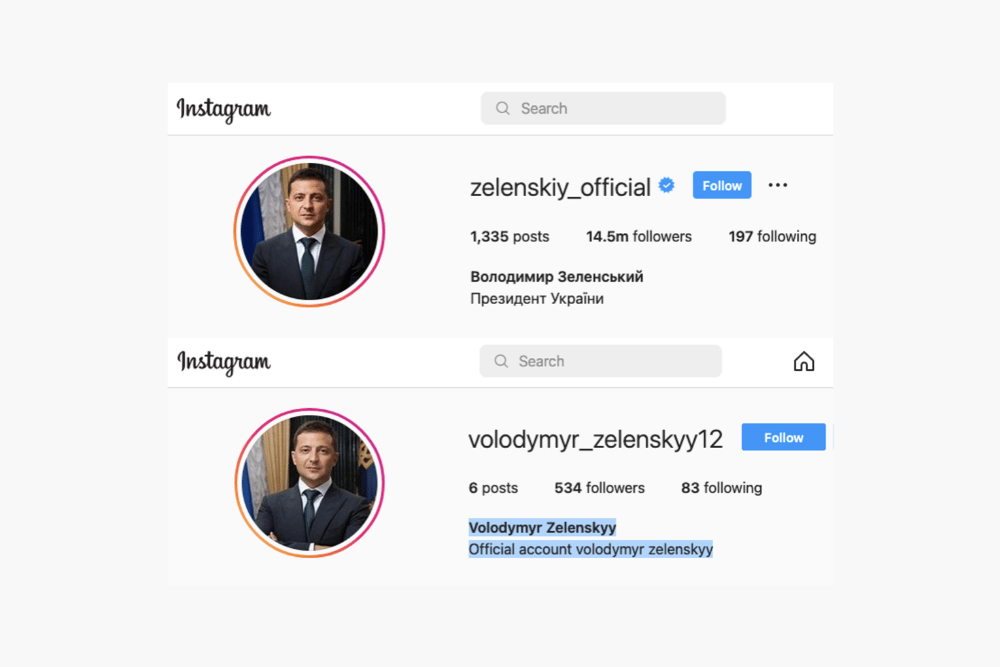

AI scams don’t stop at phone calls — phishing attacks on social media are just as dangerous. Fraudsters use fake profiles and hacked accounts to manipulate victims into handing over sensitive information.

At Eydle, we use cutting-edge AI to detect and stop phishing scams on social media. Our technology analyzes social media profiles, direct messages (DMs), websites, app stores, and the dark web, and detects phishing attempts before they reach your customers.

Learn more at www.eydle.com or contact us at [email protected]

Sources:

- [1] https://www.straitstimes.com/asia/se-asia/thai-pm-paetongtarn-almost-fooled-by-asean-leader-seeking-money-in-ai-voice-scam

- [2] https://www.cbsnews.com/newyork/news/ai-voice-clone-scam/

- [3] https://www.bitdefender.com/en-us/blog/hotforsecurity/how-to-spot-a-voice-cloning-scam

- [4] https://www.cbsnews.com/news/elder-scams-family-safe-word/

- [5] https://www.bitdefender.com/en-us/blog/hotforsecurity/how-to-spot-a-voice-cloning-scam